How to Evaluate Media Reports about Medication Safety

When you read a headline like "New Study Links Blood Pressure Drug to Heart Attacks", it’s natural to panic. Maybe you’ve been taking that medication for years. Maybe your parent is on it. You might even consider stopping it right away. But here’s the hard truth: most media reports about medication safety are incomplete, misleading, or outright wrong. And the consequences? People stop life-saving drugs, delay necessary treatments, or panic over risks that don’t exist in real-world use.

Let’s be clear: medication safety is complex. It’s not just about whether a drug "causes" something. It’s about how often, under what conditions, and whether the event was preventable. Most news stories skip all of this. But you don’t need a medical degree to spot the red flags. You just need to know what to look for.

1. Distinguish Between Medication Errors and Adverse Drug Events

Too many reports treat these the same. They’re not.

A medication error is something that went wrong in the medication-use process, like a doctor prescribing the wrong dose, a pharmacist dispensing the wrong pill, or a nurse giving it at the wrong time. These are, by definition, preventable. The National Coordinating Council for Medication Error Reporting and Prevention (NCC MERP) has a standardized index for categorizing errors by how much harm they cause, from no harm to death.

An adverse drug event (ADE) is an injury caused by a drug, regardless of whether it was a mistake. Sometimes, even when everything is done perfectly, a drug can cause a bad reaction. That’s not a failure; it’s biology. For example, a blood thinner might cause bleeding in someone with a rare genetic condition. That’s an ADE, not a medication error.

Most media reports don’t make this distinction. If they don’t say whether the event was caused by human error or an unavoidable reaction, they’re leaving out half the story. Ask yourself: Is this about a mistake, or is it about how the body reacts to a drug?

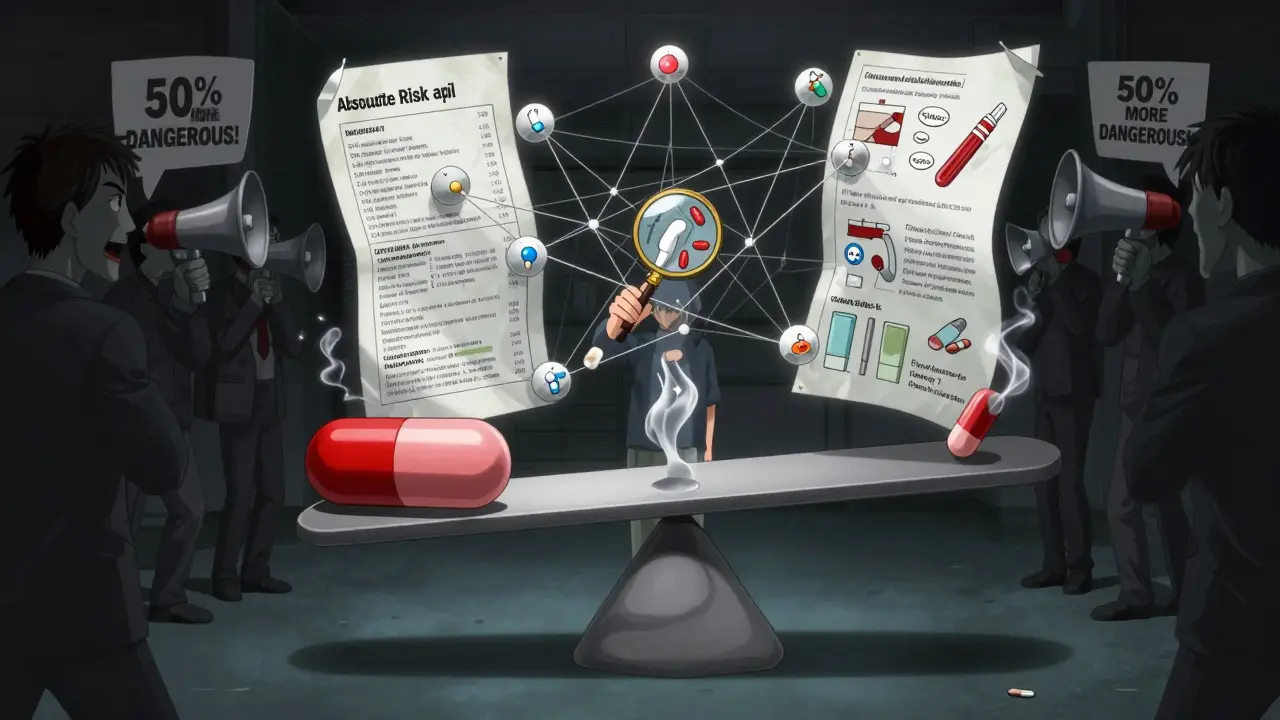

2. Demand Absolute Risk Numbers

Here’s one of the most common ways headlines mislead you: they report only relative risk.

Let’s say a study finds that Drug X increases the risk of a stroke by 50%. Sounds scary, right? But if the baseline risk is 2 in 10,000 per year, a 50% increase means it’s now 3 in 10,000. That’s still extremely rare. But you won’t hear that. The headline just says "50% higher risk," and that’s enough to scare people into quitting.

A 2020 study in the BMJ found that major newspapers reported absolute risk alongside relative risk 62% of the time. Cable news? Only 38%. Digital-only outlets? Just 22%. If a report doesn’t give you both the relative risk and the absolute risk, treat it with caution.

Always ask: How many people out of 100, 1,000, or 10,000 actually experienced this? If they can’t tell you, the report is incomplete.
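If you want to check the Drug X arithmetic yourself, the conversion takes only a few lines. The Python sketch below is illustrative: the function name and inputs are made up for this example, and it assumes the baseline is given per 10,000 people per year and the relative increase in percent.

```python
from fractions import Fraction


def absolute_risk_summary(baseline_per_10k, relative_increase_pct):
    """Turn a baseline rate (per 10,000 people per year) and a relative
    increase (in percent) into absolute numbers a reader can judge."""
    # Fractions keep the arithmetic exact (no floating-point rounding noise).
    baseline = Fraction(baseline_per_10k) / 10_000
    new_risk = baseline * (1 + Fraction(relative_increase_pct) / 100)
    extra = new_risk - baseline  # absolute risk increase
    return {
        "baseline_per_10k": float(baseline * 10_000),
        "new_per_10k": float(new_risk * 10_000),
        "extra_per_10k": float(extra * 10_000),
        # People who would need to take the drug for a year for one extra
        # case to occur; infinite if the risk does not increase.
        "people_per_extra_case": float(1 / extra) if extra > 0 else float("inf"),
    }


summary = absolute_risk_summary(baseline_per_10k=2, relative_increase_pct=50)
print(summary)
```

For the Drug X example this gives 2.0 rising to 3.0 per 10,000, an absolute increase of 1.0 per 10,000. Put another way, about 10,000 people would need to take the drug for a year for one extra stroke to occur, a figure often called the number needed to harm.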

3. Check the Study Method

Not all studies are created equal. The way researchers collect data changes everything.

Some studies rely on spontaneous reporting, like the FDA’s FAERS database, where doctors, pharmacists, or even patients report suspected side effects. That’s useful for spotting possible problems, but it has serious limits. Fewer than 10% of actual adverse events are estimated to get reported, and many reports are duplicates, incomplete, or unrelated to the drug. Yet media outlets often treat every entry as proof of causation.

Other studies use chart reviews. These look back at medical records. But they only catch 5-10% of actual medication errors. A 2020 study by Dr. David Bates showed that if you use a more thorough method-like direct observation-you find 10 times more errors. Yet, media reports rarely explain this.

One of the most efficient methods is the trigger tool. It uses specific "triggers" in patient records, like a sudden drop in blood pressure or an abnormal lab result, to flag charts where harm may have occurred; a reviewer then examines those charts to confirm what actually happened. Trigger tools are used in hospitals across the U.S. and Europe. If a report says it used a trigger tool, that’s a good sign. If it doesn’t say how the data were collected at all, be cautious: the evidence may be weak.
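To make the idea concrete, here is a minimal Python sketch of how a trigger screen might work. The record fields, the 40 mmHg blood-pressure drop, and the INR and glucose cutoffs are illustrative assumptions, not an official trigger list; real trigger tools define their own triggers. A flag only means a chart gets a closer look, not that an error happened.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class PatientRecord:
    # Hypothetical, simplified record; real charts are far richer.
    patient_id: str
    systolic_bp: List[int]  # readings in time order, mmHg
    inr: Optional[float] = None  # latest INR lab value
    glucose_mg_dl: Optional[float] = None  # latest blood glucose
    meds_given: Set[str] = field(default_factory=set)


# Illustrative thresholds (assumptions, not an official trigger list).
BP_DROP_MMHG = 40
INR_HIGH = 6.0
GLUCOSE_LOW = 50.0


def find_triggers(rec: PatientRecord) -> List[str]:
    """Return the names of any triggers found in one record."""
    triggers = []
    # Sudden drop: a fall of BP_DROP_MMHG or more between consecutive readings.
    for earlier, later in zip(rec.systolic_bp, rec.systolic_bp[1:]):
        if earlier - later >= BP_DROP_MMHG:
            triggers.append("sudden BP drop")
            break
    # Abnormal lab results.
    if rec.inr is not None and rec.inr > INR_HIGH:
        triggers.append(f"INR > {INR_HIGH:g}")
    if rec.glucose_mg_dl is not None and rec.glucose_mg_dl < GLUCOSE_LOW:
        triggers.append(f"glucose < {GLUCOSE_LOW:g} mg/dL")
    # A rescue drug such as naloxone can hint that an earlier dose caused harm.
    if "naloxone" in rec.meds_given:
        triggers.append("naloxone given")
    return triggers


def flag_for_review(records: List[PatientRecord]) -> Dict[str, List[str]]:
    """Map patient IDs to their triggers; unflagged records are left out.
    A flag means 'review this chart', not 'an error occurred'."""
    flagged = {}
    for rec in records:
        triggers = find_triggers(rec)
        if triggers:
            flagged[rec.patient_id] = triggers
    return flagged


records = [
    PatientRecord("A", [130, 128, 85]),
    PatientRecord("B", [120, 118], inr=2.5),
    PatientRecord("C", [140, 138], inr=7.1, meds_given={"naloxone"}),
]
print(flag_for_review(records))
```

Only records A and C are flagged here; a reviewer would then read those charts to decide whether a medication actually caused harm. Pairing a cheap automated screen with targeted human review is what keeps the approach efficient compared with reading every chart.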

4. Look for Confounding Factors

Did the study control for other variables? Many news stories never say.

Take a study that says "Drug Y increases diabetes risk." But what if the people taking Drug Y were more likely to be overweight, sedentary, and on other medications that also raise blood sugar? Then the extra diabetes cases may come from those factors, not from Drug Y.

A 2021 audit in JAMA Internal Medicine found that only 35% of media reports mentioned whether the study controlled for confounding factors. That means about 65% of stories leave you no way to tell whether the drug or something else explains the result.

Good studies compare groups that are nearly identical except for the drug. If the report doesn’t say how the groups were matched, or just says "we looked at 500 patients," you have no way to judge whether the comparison was fair. That’s guesswork, not science.
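Here is a small illustration of how a confounder can create an apparent effect. The counts are invented: in this hypothetical data set, Drug Y users are more often overweight, and being overweight raises diabetes risk on its own. Comparing users and non-users within each weight group (stratification, one simple way to control for a single confounder) makes the apparent effect disappear.

```python
from typing import Dict, Tuple

# Hypothetical counts: stratum -> group -> (diabetes cases, people in group).
DATA: Dict[str, Dict[str, Tuple[int, int]]] = {
    "overweight": {"drug_y": (80, 800), "no_drug": (20, 200)},
    "not_overweight": {"drug_y": (4, 200), "no_drug": (16, 800)},
}


def risk(cases: int, total: int) -> float:
    return cases / total


def crude_risks(data) -> Dict[str, float]:
    """Pool all strata together, ignoring weight (a naive comparison)."""
    pooled = {}
    for groups in data.values():
        for group, (cases, total) in groups.items():
            c, t = pooled.get(group, (0, 0))
            pooled[group] = (c + cases, t + total)
    return {group: risk(c, t) for group, (c, t) in pooled.items()}


def stratified_risk_ratios(data) -> Dict[str, float]:
    """Compare Drug Y users with non-users within each weight group."""
    return {
        stratum: risk(*groups["drug_y"]) / risk(*groups["no_drug"])
        for stratum, groups in data.items()
    }


crude = crude_risks(DATA)
print(f"Crude: {crude['drug_y']:.1%} vs {crude['no_drug']:.1%}")
print("Within each weight group:", stratified_risk_ratios(DATA))
```

The pooled numbers (8.4% vs 3.6%) make Drug Y look like it more than doubles diabetes risk, yet within each weight group the risk ratio is 1.0. Real studies adjust for many factors at once with more sophisticated methods, but the underlying logic is the same.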

5. Verify the Source

Did the report cite the original study? Or did it just say "a new study found..."?

Always go back to the source. Check whether the study was published in a peer-reviewed journal, and look up the journal’s reputation. Is it a journal like NEJM or The Lancet, or a low-tier journal with lax review standards?

Also, check where the data came from. The FDA’s FAERS database? The WHO’s Uppsala Monitoring Centre? These are legitimate data sources, but they’re not proof of causation. They’re signals. A spike in reports doesn’t necessarily mean the drug is dangerous; it might mean more people are using it, or doctors are more aware.
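A spike in raw counts means little until you divide by how many people are taking the drug. In this Python sketch the report and user counts are invented for illustration; in practice, usage estimates would have to come from a separate source such as prescription data, which is an assumption here.

```python
def reports_per_100k(reports: int, users: int) -> float:
    """Adverse event reports per 100,000 people taking the drug."""
    # Multiplying first avoids rounding noise for these example numbers.
    return reports * 100_000 / users


# Hypothetical figures for illustration only (not real FAERS or usage data).
year_1 = {"reports": 150, "users": 500_000}
year_2 = {"reports": 300, "users": 1_000_000}

rate_1 = reports_per_100k(**year_1)
rate_2 = reports_per_100k(**year_2)

print(f"Raw reports: {year_1['reports']} -> {year_2['reports']}")
print(f"Reports per 100,000 users: {rate_1:.1f} -> {rate_2:.1f}")
```

Reports doubled, but so did the number of users, so the rate stayed at 30 per 100,000. This adjustment only accounts for how many people use the drug; it cannot correct for changes in awareness or reporting habits, which is one more reason these databases produce signals rather than proof.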

Here’s a quick trick: search for the study title on PubMed or the journal’s website (for clinical trials, ClinicalTrials.gov also lists registered studies). If the article doesn’t link to the study, or worse, just says "a study in a medical journal," run.

6. Watch for Sensational Language

Words like "deadly," "dangerous," "toxic," or "linked to" are red flags.

Scientific writing uses "associated with," "increased risk," or "potential link." Media uses fear. A 2023 analysis by the National Patient Safety Foundation found that 68% of social media posts about medication safety used alarmist language. Instagram and TikTok were the worst.

Even reputable outlets do it. Headlines like "This Common Drug Could Kill You" are designed for clicks, not clarity. If the article doesn’t explain the actual risk level, the tone is the first clue something’s off.

7. Compare with Official Guidelines

What do experts actually recommend?

The Institute for Safe Medication Practices (ISMP) maintains a list of error-prone abbreviations, symbols, and dose designations. The American Society of Health-System Pharmacists (ASHP) has guidelines on monitoring and reporting adverse drug reactions. The FDA has published guidance on good pharmacovigilance practices.

If the media report contradicts these, it’s likely wrong. For example, ISMP says never to use "U" for units, because it can be mistaken for "0" or "4." If a report blames a medication error on "U" being used, that’s consistent with ISMP guidance. If it blames the drug itself for what was really an abbreviation error, that’s misleading.

Check the ISMP website or ASHP guidelines. You don’t need to read the whole thing. Just scan for the key points. If the report aligns with them, it’s more credible.

8. Remember: Reporting ≠ Causation

This is the most important thing.

Just because someone took a drug and then had a heart attack doesn’t mean the drug caused it. Maybe they had a family history. Maybe they smoked. Maybe they skipped their other meds.

Spontaneous reporting systems like FAERS collect reported events whether or not the drug actually caused them. The FDA itself says: "Reports to FAERS do not necessarily indicate causation." But you’d rarely know that from the news.

Look for phrases like "in association with," "observed in," or "during treatment." Be wary of reports that say "caused," "led to," or "resulted in" when the evidence comes from case reports or observational data. Those claims usually go beyond what the data can show.

9. Be Wary of AI-Generated Reports

AI is making this problem worse.

A 2023 Stanford study found that 65% of medication safety articles written by large language models contained serious factual errors. They’d mix up drug names, misstate risk percentages, or invent studies that don’t exist.

And AI-generated content is everywhere now-on blogs, news aggregators, even social media ads. If you read it on a site you’ve never heard of, or it sounds too perfect, too simple, or too dramatic, be extra skeptical.

10. What Should You Do?

Don’t stop your meds because of a headline.

Here’s what to do instead:

- Wait 48 hours before acting. If the concern is real, it will still be there in two days.

- Find the original study. Use Google Scholar or PubMed.

- Check the sample size, control group, and duration.

- Look for absolute risk numbers.

- Compare with ISMP or ASHP guidelines.

- Call your pharmacist or doctor. Ask: "Is this something I should be worried about?"

Most of the time, you’ll find the risk is tiny, or the issue is something else entirely.

Medication safety isn’t about fear. It’s about understanding. And you have the tools to cut through the noise.

Frequently Asked Questions

Can I trust media reports that cite "a new study"?

No, not without checking the details. "A new study" is a vague phrase used to make stories sound urgent. Always find the original source. Look for the journal name, authors, and whether it was peer-reviewed. If the report doesn’t link to it or name the study, treat it as unreliable.

Why do some drugs get pulled from the market after media coverage?

It’s rarely because of the media. Drugs are pulled when regulatory agencies like the FDA or EMA see a consistent pattern of serious harm across multiple studies and real-world data. Media coverage might speed up public concern, but decisions are based on rigorous analysis, not headlines. For example, Vioxx was withdrawn after a large clinical trial showed a clear increase in heart attacks, not because of news stories.

Is it true that most adverse reactions aren’t reported?

Yes. Experts estimate that only 5-10% of adverse drug events are reported to systems like the FDA’s FAERS. This is because many are mild, go unnoticed, or aren’t connected to the drug by the patient or doctor. That’s why spontaneous reporting systems are used to detect signals, not to measure exact risk.

How do I know if a medication is safe for me personally?

Ask your pharmacist or doctor. They have access to your medical history, current medications, allergies, and genetic factors. A drug that’s risky for one person might be perfectly safe for another. General media reports can’t account for your individual situation. Personalized care beats mass media.

Should I avoid medications that have "black box" warnings?

Not necessarily. A black box (boxed) warning is the strongest warning the FDA can require on a drug’s label, but it doesn’t mean the drug is unsafe for everyone. It means the risk is serious and requires careful monitoring. Many life-saving drugs, like certain antidepressants or cancer treatments, have black box warnings. The key is whether the benefit outweighs the risk for your condition. Never stop a drug because of a warning without talking to your doctor.

Comments

Rachele Tycksen

March 23, 2026 AT 08:07

i just read this headline about a blood pressure med and almost threw my pills out 😅 like... i didn't even finish the article. then i remembered this post. thanks for the reality check. i'm gonna wait 48 hours before panicking. also, typos are inevitable. forgive me.

Grace Kusta Nasralla

March 23, 2026 AT 14:17

We are all drowning in data, yet starved of meaning. The media doesn't lie; it simply rearranges the silence. What is not said? The body's intelligence? The quiet dignity of slow, systemic harm? We treat pills like villains when the real disease is our inability to tolerate uncertainty.

Aaron Sims

March 24, 2026 AT 04:51

Oh, so now we're supposed to trust 'peer-reviewed' journals? LOL. Who funds them? Big Pharma? The FDA? The same people who told us Vioxx was 'safe'? And don't get me started on 'trigger tools': that's just corporate jargon for 'we're too lazy to actually watch people take their meds.'

Stephen Alabi

March 25, 2026 AT 03:09

The fundamental flaw in contemporary pharmacovigilance lies in the epistemological disconnect between spontaneous reporting systems and causal inference. One cannot derive causality from correlation, particularly when the signal-to-noise ratio in FAERS is estimated at 0.07. Furthermore, the conflation of medication error with adverse drug event constitutes a category error of the highest order.

Kevin Siewe

March 26, 2026 AT 04:38

You're not alone in feeling overwhelmed. This post is gold. I always tell my patients: if a headline makes your heart race, pause. Breathe. Go to PubMed. Type in the drug name + 'clinical trial'. You'll find the real numbers. And if you're still unsure? Call your pharmacist. They're the unsung heroes of medication safety.

Chris Farley

March 27, 2026 AT 13:33

This whole thing is a socialist distraction. The media doesn't lie; THEY'RE PAID TO LIE. Who benefits from making people afraid of their prescriptions? The government. The WHO. The globalist elites who want you docile and dependent. Stop taking your meds. Go primal. Eat meat. Lift weights. Your body doesn't need Big Pharma's poison.

Jesse Hall

March 29, 2026 AT 07:16

This is so helpful! 💪 Seriously, I used to scroll past these headlines and freak out. Now I check the study, look for absolute risk, and call my pharmacist. It’s like a superpower. Thanks for breaking it down so clearly! 🙌

Donna Fogelsong

March 30, 2026 AT 06:05

The system is rigged. FAERS is a joke. Trigger tools? Corporate PR. They don't want you to know that 90% of ADEs are preventable AND covered up. The FDA has been bought. Your 'medication safety' is a marketing campaign. You think you're safe? You're just another data point in their profit algorithm.

Sean Bechtelheimer

March 30, 2026 AT 15:56

lol i just saw a tiktok that said 'this drug kills 1 in 3 people' so i stopped my blood pressure med 🤡 now i'm dizzy. but hey, at least i'm 'awake' right? 🤔

Seth Eugenne

April 1, 2026 AT 10:34

I really appreciate how calm and clear this is. I work in a clinic and see patients panic every time a new headline drops. This breakdown is exactly what we need to hand out. Thank you for taking the time to write this. You’re helping people stay safe, not scared. 🙏

winnipeg whitegloves

April 1, 2026 AT 10:53

Man, I live in Winnipeg and we get snowed under with bad health news every winter. This post is like a warm blanket in a blizzard. Absolute risk numbers? Yeah, that’s the stuff. I’m gonna print this and stick it on my fridge next to the recipe for poutine.

Korn Deno

April 2, 2026 AT 23:24

The deeper question isn't whether the media misrepresents drug risks; it's why we let it. We crave certainty in a world of probabilities. We want a villain to blame. But biology doesn't care about headlines. The truth is messy. Complex. Uncomfortable. And that's why we'd rather believe a lie than sit with the ambiguity.